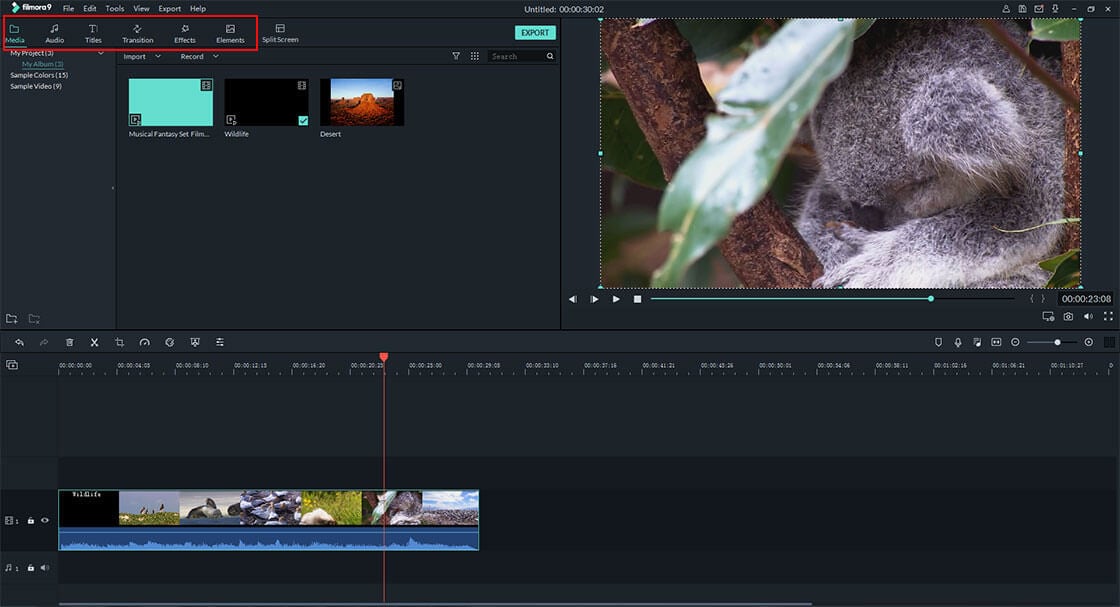

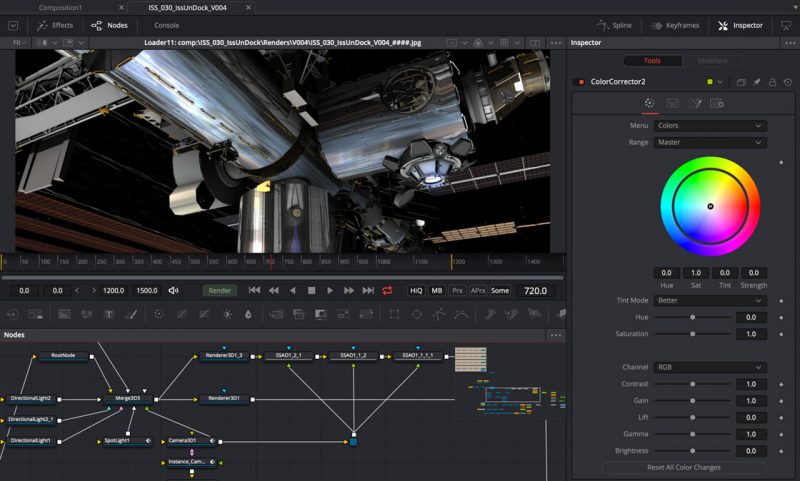

“A lot of this comes down to the relationship between client and studio,” Barber says. “If a studio has good access to what is happening on set, it’s easier to explain what they need and why without causing alarm.”

Rotoscoping, another labour-intensive task, is being tackled by Australian company Kognat’s Rotobot. Using its AI, the company says a frame can be processed in as little as 5-20 seconds. The accuracy is limited by the quality of the deep learning model behind Rotobot but will improve as it feeds on more data.

The future of filmmaking is AI and Realtime

A proof-of-concept led by facility The Mill showcased the potential for real-time processes in broadcast, film and commercials productions. ‘The Human Race’ combined Epic’s Unreal game engine, The Mill’s virtual production toolkit Cyclops and Blackbird, an adjustable car rig that captures environmental and motion data. On the shoot, Cyclops stitched 360-degree camera footage and transmitted it live to the Unreal engine, producing an augmented reality image of the virtual object tracked and composited into the scene using computer vision technology from Arraiy.

Barber reckons this shouldn’t be too difficult.

Software developer Foundry has a new approach that uses algorithms to track camera movement more accurately, drawing on metadata from the camera at the point of acquisition (lens type, how fast the camera is moving and so on). Lead software engineer Alastair Barber says the results have improved the matchmoving process by 20% and proved the concept by training the algorithm on data from DNEG, one of the world’s largest facilities.

For wider adoption, studios will have to convince clients to let them delve into their data.

There are very few effects that are impossible to create, given sufficient resources (artists, money), including challenges such as crossing the uncanny valley for photorealistic faces. More recently the VFX industry has focussed most of its efforts on creating more cost-effective, efficient and flexible pipelines in order to meet the demand for increased VFX film production.

For a while, many of the most labour-intensive and repetitive tasks, such as matchmove, tracking, rotoscoping, compositing and animation, were outsourced to cheaper foreign studios, but with the recent progress in deep learning, many of these tasks can be not only fully automated, but also performed at no cost and extremely fast.

As Smit explains: “Data is the foundational element, and whether that’s in your character simulation and animation workflow, your render pipeline, or your project planning, innovations are granting the capability to implement learning systems that are able to add to the quality of work and, perhaps, the predictability of output.”

Matchmoving, for example, allows CGI to be inserted into live-action footage while keeping scale and motion correct. It can be a frustrating process because tracking camera placement within a scene is typically done manually and can sap more than 5% of the total time spent on the entire VFX pipeline.
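To see why matchmoving depends on knowing where the camera is, it can help to look at a simplified pinhole camera model. The sketch below is an illustration only, not a description of any studio's or vendor's tooling: the function names, the single yaw angle and the example lens and sensor values are all assumptions made for the example. It shows how a focal length taken from lens metadata and an estimated camera position determine the pixel at which a virtual object should be drawn.

```python
import math


def focal_length_px(focal_mm, sensor_width_mm, image_width_px):
    """Convert a lens focal length in millimetres to pixels.

    Hypothetical helper: assumes square pixels and a known sensor width.
    """
    return focal_mm / sensor_width_mm * image_width_px


def project(point_world, cam_pos, yaw_deg, focal_px, width, height):
    """Project a 3D point into pixel coordinates with a pinhole model.

    Assumed conventions: camera looks down +Z, X is right, Y is up,
    and yaw is a rotation about the Y axis. Returns None if the point
    lies behind the camera.
    """
    # Express the point relative to the camera position.
    x = point_world[0] - cam_pos[0]
    y = point_world[1] - cam_pos[1]
    z = point_world[2] - cam_pos[2]

    # Rotate into the camera's frame (undo the camera's yaw).
    a = math.radians(yaw_deg)
    xc = math.cos(a) * x - math.sin(a) * z
    zc = math.sin(a) * x + math.cos(a) * z
    if zc <= 0:
        return None

    # Perspective divide, then shift to the image centre.
    u = width / 2 + focal_px * xc / zc
    v = height / 2 - focal_px * y / zc
    return (u, v)


if __name__ == "__main__":
    # Example values only: a 35mm lens on a 36mm-wide sensor, HD frame.
    f = focal_length_px(35.0, 36.0, 1920)
    cg_object = (0.0, 0.0, 10.0)  # virtual object 10 units ahead
    for cam_x in (0.0, 0.5, 1.0):  # camera tracking to the right
        print(cam_x, project(cg_object, (cam_x, 0.0, 0.0), 0.0, f, 1920, 1080))
```

As the assumed camera position shifts from frame to frame, the projected pixel shifts with it. If the estimated camera path is wrong, the virtual object appears to slide against the live-action plate, which is the error a matchmove is meant to remove.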

Over the past decade 3D animations, simulations and renderings have reached a level of fidelity, in terms of photorealism or art direction, that looks near-perfect to the audience.

Mari 4.5: Painting package for VFX artists developed by Foundry

“A combination of physics simulation with AI/ML generated results and the leading eye and hand of expert artists and content creators will lead to a big shift in how VFX work is done,” says Michael Smit, CCO of software makers Ziva Dynamics. “Over the long-term, these technologies will radically change how content is created.”

Simon Robinson, co-founder at VFX tools developer Foundry, says: “The change in pace, the greater predictability of resources and timing, plus improved analytics will be transformational to how we run a show.”

In Avengers: Endgame, Josh Brolin’s performance was flawlessly rendered into the 9ft super-mutant Thanos by teams of animators at Weta Digital and Digital Domain. In a sequence from 2018’s Solo: A Star Wars Story, the 76-year-old Harrison Ford appears pretty realistically as his 35-year-old self playing Han Solo in 1977.

Both examples were produced using artificial intelligence and machine learning tools to automate parts of the process, but while one was made with the full force of Hollywood, the other was apparently produced by one person and uploaded to the Derpfakes YouTube channel.

Both demonstrate that AI/ML can not only revolutionise VFX creation for blockbusters but also put sophisticated VFX techniques into the hands of anyone.